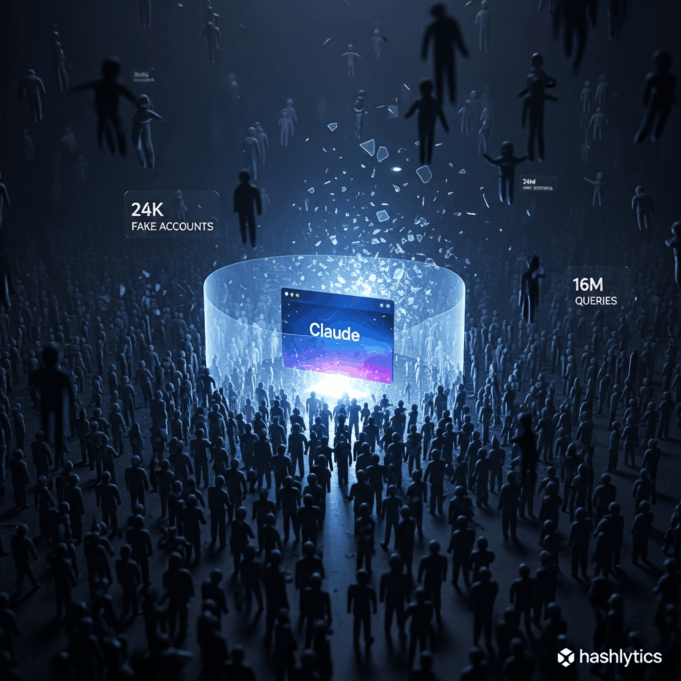

Anthropic named DeepSeek, Moonshot AI, and MiniMax on February 23, 2026, as perpetrators of industrial-scale distillation attacks using approximately 24,000 fraudulent accounts that generated over 16 million exchanges with Claude. The campaigns were explicitly designed to extract capabilities for training competing Chinese models while stripping out safety guardrails preventing bioweapon development, malicious cyber operations, and mass surveillance.

The disclosure follows OpenAI’s February 12 memo to Congress accusing DeepSeek of identical tactics and Google’s warning the same day about distillation campaigns targeting Gemini with 100,000+ prompts, escalating what Anthropic’s threat intelligence head Jacob Klein calls a national security crisis requiring rapid, coordinated action among industry players, policymakers, and the broader AI community.

The Hydra Playbook: Load Balancing, Chain-of-Thought Harvesting, Censorship Training

DeepSeek’s campaign ran synchronized traffic across fraudulent accounts that shared payment methods and coordinated their timing, a pattern Anthropic identified as load balancing to increase throughput, improve reliability, and avoid detection.

Request metadata traced activity to specific DeepSeek researchers. In a particularly sophisticated technique, prompts asked Claude to imagine and articulate the internal reasoning behind a completed response and write it out step by step, effectively generating chain-of-thought training data at industrial scale. The campaign also generated censorship-safe alternatives to politically sensitive queries about dissidents, party leaders, and authoritarianism, training DeepSeek’s models to steer conversations away from topics forbidden by Chinese state censors.

Moonshot AI (creator of the Kimi model series) ran 3.4 million exchanges across hundreds of fraudulent accounts spanning multiple access pathways, targeting agentic reasoning, tool use, coding, data analysis, and computer vision. Anthropic traced the operation to senior Moonshot staff through request metadata matching public employee profiles. MiniMax executed the largest campaign, over 13 million exchanges focused on agentic coding and tool use. Anthropic detected it while it was still active, which provided unusual visibility into the full lifecycle of a distillation attack.

The Infrastructure: 20,000-Account Proxy Networks Mixing Legitimate Traffic

To bypass Anthropic’s restrictions on commercial access in China, the labs used hydra cluster architectures: sprawling proxy networks that reportedly managed over 20,000 fraudulent accounts simultaneously. These services mixed distillation traffic with legitimate customer requests to evade pattern detection, routing queries through third-party cloud platforms and commercial API access points. One network alone handled coordination at that full scale, suggesting state-level infrastructure investment rather than opportunistic abuse.

The Export Control Failure: Chip Restrictions Don’t Stop Model Theft

Anthropic’s report exposes the limitations of current U.S. export controls, which focus on restricting China’s access to advanced AI chips and preventing direct model weight transfers. Klein argued distillation targets a different layer of competitive advantage—the reinforcement learning process that refines and sharpens frontier models after they are trained.

Even without cutting-edge GPUs, foreign labs acquire capabilities in a fraction of the time, and at a fraction of the cost, through systematic output harvesting, bypassing years of research investment by repeatedly querying production systems.

The strategic parallel to the OpenClaw controversy is striking: just as third-party tools exploited consumer OAuth tokens beyond intended purposes, Chinese labs weaponized legitimate API access to extract capabilities at industrial scale. But where Google banned individual users for terms violations, Anthropic faces nation-state actors with unlimited resources deploying proxy networks faster than individual accounts can be blocked.

The Military Dimension: Bioweapons, Cyber Ops, Mass Surveillance

Anthropic’s national security framing goes beyond intellectual property theft. The company argues that foreign labs that distill American models can then feed these unprotected capabilities into military, intelligence, and surveillance systems—enabling authoritarian governments to deploy frontier AI for offensive cyber operations, disinformation campaigns, and mass surveillance.

Critically, distillation strips safety guardrails: Claude won’t help develop bioweapons or execute malicious cyber attacks, but a distilled Chinese model trained on Claude’s outputs lacks those refusals.

The timing matters politically. Anthropic faces reported tensions with the Pentagon over military use of Claude: War Secretary Pete Hegseth is meeting with CEO Dario Amodei to discuss terms after Claude allegedly played a role in the U.S. operation capturing Venezuelan leader Nicolás Maduro. Administration officials suggest Anthropic balked at military deployment, while the company insists its concerns focus on mass surveillance and fully autonomous weapons. The distillation disclosure shifts the narrative: if Chinese labs are stealing capabilities for authoritarian military use anyway, restricting U.S. defensive applications becomes harder to justify.

Open Source as Attack Vector: DeepSeek R1’s Strategic Release

DeepSeek’s January 2025 release of R1 as an open-weight model, freely downloadable and modifiable by anyone, multiplies distillation risks exponentially. If distilled capabilities get open-sourced, they spread freely beyond any single government’s control, enabling non-state actors, rogue nations, and terrorist organizations to access frontier AI capabilities stripped of safety constraints. China’s embrace of open-source AI since DeepSeek’s breakthrough contrasts sharply with U.S. labs’ closed systems, creating asymmetric vulnerability where American companies build guardrails that Chinese competitors reverse-engineer and remove before public release.

Industry Coordination: Three Accusations in 11 Days

The synchronized disclosures suggest coordinated threat intelligence sharing. OpenAI’s February 12 memo to the House Select Committee on China accused DeepSeek of using third-party routers and masking techniques to bypass geographic access restrictions and harvest outputs from ChatGPT.

Google warned the same day about distillation attacks on Gemini from private-sector companies and state-aligned actors. Anthropic’s February 23 report provides the most detailed attribution, directly naming three Chinese labs and quantifying attack scale with unprecedented specificity.

Klein told Fox News: “We have high confidence these labs were conducting distillation attacks at scale. What we can say with confidence is they distilled us at scale.”

While Anthropic cannot precisely quantify how much the Chinese labs allegedly improved their systems, Klein characterized capability gains as meaningful and substantial.

The campaigns targeted Claude’s most differentiated capabilities: agentic reasoning, tool use, and coding, exactly the areas where frontier U.S. models maintain competitive advantage over Chinese alternatives.

Countermeasures Without Silver Bullets

Anthropic is developing model-level countermeasures designed to reduce the usefulness of Claude’s outputs for distillation without degrading the experience for legitimate users, though specifics remain undisclosed. The challenge: any defensive measure sophisticated enough to detect distillation might also flag power users, developers running automated workflows, or enterprises integrating Claude into complex systems. The company emphasized that no single company can address the problem alone, calling for a coordinated industry response, cloud provider cooperation, and policy intervention.

The absence of immediate technical fixes explains the public attribution strategy. By naming DeepSeek, Moonshot, and MiniMax explicitly, a step unprecedented for Anthropic’s typically cautious communications, the company signals that diplomatic and regulatory responses matter more than technical defenses. Klein’s statement that addressing attacks will require rapid, coordinated action reads as a direct appeal to policymakers: export controls on chips aren’t enough when the attack surface is API access itself.
