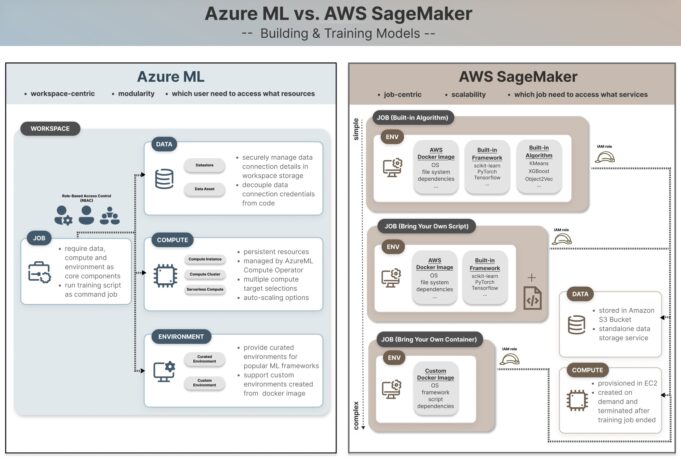

Architectural Differences

| Component | Azure ML | AWS SageMaker |
|---|---|---|
| Design Philosophy | Modular: Compute, Data, Environment managed as independent assets | Job-centric: Services orchestrated together for ML execution |
| Compute Model | Persistent, workspace-centric resources | On-demand, job-specific provisioning |
| Environment Customization | Curated environments for popular frameworks; custom Docker images | Three-tiered: Built-in Algorithms, Bring Your Own Script, Bring Your Own Container |
| Training/Deployment | Shared environment model | Distinct separation between training and deployment |

Azure ML: Built for Accessibility

Azure ML’s modularity significantly lowers the barrier to entry. Data scientists can configure compute clusters, data stores, and software environments as separate, understandable components. This decoupling simplifies experimentation and is less intimidating for teams where MLOps is not yet a core competency.

The platform provides curated environments for frameworks like PyTorch and TensorFlow, plus support for custom Docker images. This focus on independent assets makes it faster to get started on projects without requiring deep cloud infrastructure expertise.
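To make the idea of independent assets concrete, here is a minimal sketch in plain Python. The class names (`ComputeAsset`, `EnvironmentAsset`, `DataAsset`, `Experiment`) and all values are hypothetical stand-ins for illustration; they are not Azure ML SDK types, and the real SDK's API differs.

```python
from dataclasses import dataclass, replace


# Hypothetical stand-ins for workspace assets; NOT Azure ML SDK classes.
@dataclass(frozen=True)
class ComputeAsset:
    name: str
    vm_size: str  # illustrative label, not a real VM SKU


@dataclass(frozen=True)
class EnvironmentAsset:
    name: str
    image: str  # curated environment or custom Docker image reference (made up)


@dataclass(frozen=True)
class DataAsset:
    name: str
    path: str


@dataclass(frozen=True)
class Experiment:
    script: str
    compute: ComputeAsset
    environment: EnvironmentAsset
    data: DataAsset


# Each asset is defined once, independently of the others.
cpu = ComputeAsset("cpu-cluster", "small-cpu")
gpu = ComputeAsset("gpu-cluster", "large-gpu")
env = EnvironmentAsset("pytorch-curated", "example/pytorch:latest")
data = DataAsset("training-set", "datastore/train/")

baseline = Experiment("train.py", cpu, env, data)

# Swapping the compute leaves the environment and data references untouched.
scaled = replace(baseline, compute=gpu)
```

The point of the sketch is only that changing one component does not require redefining the others, which is the property the modular design is meant to provide.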

SageMaker: Engineered for Production Scale

SageMaker’s integrated, job-centric model is purpose-built for teams with high MLOps maturity. By treating training as an orchestrated job with ephemeral compute and environment, it provides the robust separation and scalability required for automated CI/CD pipelines and large-scale distributed training.

The three-tiered customization model offers a clear progression: Built-in Algorithms for simplicity, Bring Your Own Script (BYOS) for framework-specific code, and Bring Your Own Container (BYOC) for maximum control using custom Docker images pushed to ECR.
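The progression across the three tiers can be read as a simple decision rule. The sketch below is an illustration only: `Tier` and `choose_tier` are hypothetical names, not part of the SageMaker SDK, and the two yes/no questions are an assumed simplification of what is usually a more nuanced choice.

```python
from enum import Enum


class Tier(Enum):
    BUILT_IN = "Built-in Algorithms"
    BYOS = "Bring Your Own Script"
    BYOC = "Bring Your Own Container"


def choose_tier(has_custom_code: bool, needs_custom_image: bool) -> Tier:
    """Pick the least complex tier that satisfies the requirements."""
    if needs_custom_image:
        # Full control: a custom Docker image pushed to ECR.
        return Tier.BYOC
    if has_custom_code:
        # Framework-specific training script run in a provided container.
        return Tier.BYOS
    # No custom code or image needed: use a built-in algorithm.
    return Tier.BUILT_IN


print(choose_tier(has_custom_code=True, needs_custom_image=False).value)
```

The rule deliberately prefers the simplest tier that works, mirroring the path from simplicity to full control described above.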

This approach offers granular control over infrastructure but comes with a steeper learning curve: it requires a solid understanding of interconnected AWS services such as IAM roles, S3, and ECR.

The Tradeoffs in Practice

SageMaker’s complexity enforces best practices for reproducibility and production readiness from the outset. The clear separation of training and deployment prevents environment drift and other issues that arise in more persistent, stateful systems.

However, labeling Azure ML as merely beginner-friendly undersells its flexibility. The modular design can be advantageous for complex research scenarios where components need independent management, without being locked into a single, monolithic job definition.

Azure’s persistent compute model is analogous to a dedicated workstation. SageMaker’s on-demand compute is like requisitioning a specialized factory for a single production run. Each model optimizes for different priorities: iteration speed versus isolation and scalability.
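The workstation-versus-factory contrast, and the environment drift mentioned earlier, can be illustrated with a toy model. This is a sketch under stated assumptions, not a description of how either platform behaves internally: `PersistentCompute`, `run_ephemeral`, and the package names are all hypothetical.

```python
class PersistentCompute:
    """Toy long-lived compute: installed packages carry over between runs."""

    def __init__(self, base_packages):
        self.packages = set(base_packages)

    def run(self, extra_packages=()):
        # Ad hoc installs persist on the machine after the run finishes.
        self.packages.update(extra_packages)
        return frozenset(self.packages)


def run_ephemeral(base_packages, extra_packages=()):
    """Toy per-job compute: the environment is rebuilt from the base each time."""
    return frozenset(set(base_packages) | set(extra_packages))


base = {"numpy", "torch"}

# "Workstation": a one-off install in the first run leaks into the second.
workstation = PersistentCompute(base)
workstation.run(extra_packages={"debug-tool"})
drifted = workstation.run()

# "Factory": every run starts from the declared base, so nothing leaks.
first_job = run_ephemeral(base, {"debug-tool"})
second_job = run_ephemeral(base)
```

In this model the persistent machine is quicker to iterate on because nothing is rebuilt, while the ephemeral jobs stay isolated from one another, which is the tradeoff between iteration speed and isolation described above.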

What to Watch

The key trend is potential convergence. Watch for AWS to introduce higher-level abstractions and managed workflows to simplify the SageMaker user experience. Simultaneously, monitor Azure for more integrated, job-centric orchestration tools that cater to mature MLOps teams seeking SageMaker’s rigorous pipeline control.

The evolution of serverless ML compute options on both platforms, like Azure’s serverless compute, will also shape the future cost and management overhead of model training.

Choose Azure ML for rapid experimentation and teams without deep cloud infrastructure expertise. Its modular architecture prioritizes ease of use and flexibility.

Choose AWS SageMaker for large-scale, automated training pipelines where reproducibility and infrastructure control are paramount. Its job-centric system is built for MLOps maturity.

Environment customization is the key technical differentiator. SageMaker’s three-tiered model offers a clear path from simplicity to full control, whereas Azure focuses on curated and custom Docker environments without the built-in algorithm shortcut.
