Pentagon Designates First U.S. AI Company as Security Risk

Defense Secretary Pete Hegseth labeled Anthropic a supply-chain risk after negotiations collapsed over the company’s refusal to allow unrestricted military use of Claude. Anthropic CEO Dario Amodei stated the company would not permit its models to be used for mass surveillance of U.S. citizens or in fully autonomous weapons systems, positions that Defense Department officials described as creating operational unpredictability.

The designation marks the first time a U.S.-based AI company has received this classification, which typically applies to foreign technology vendors like Huawei. The action requires defense contractors and government agencies working with the Pentagon to certify they do not use Anthropic’s technology. President Trump separately ordered all federal agencies to stop using Claude, affecting Treasury, State Department, and Health and Human Services deployments.

Anthropic announced it will challenge the designation in court, calling it “unlawful and politically motivated.” The company held a $200 million Pentagon contract awarded in July 2025 and had integrated Claude into classified defense networks through a partnership with AWS and Palantir launched in November 2024.

Cloud Providers Draw Commercial-Defense Boundary

Microsoft issued the first statement on March 6, confirming that legal review concluded Anthropic products “can remain available to customers other than the Department of War” through platforms including Microsoft 365, GitHub, and Azure AI Foundry. Google followed on March 7, stating the determination “does not preclude us from working with Anthropic on non-defense related projects” and that Claude remains accessible through Google Cloud Vertex AI.

Amazon, which has invested $8 billion in Anthropic since 2023, confirmed AWS customers can “continue to use Claude for all their workloads not associated with the Department of War.” The statement came despite Amazon’s deep integration with Anthropic, including a commitment to use 500,000 Trainium 2 chips and an $11 billion data center campus called Project Rainier dedicated to the AI company.

Technical and Operational Separation

Cloud providers are implementing security controls to enforce the commercial-defense split. Microsoft’s approach involves enhanced Azure Policy definitions that automatically apply different AI service catalogs based on tenant type and compliance requirements. Government cloud environments will operate under separate frameworks from commercial Azure services.
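As a rough illustration of what a tenant-based catalog split could look like, here is a minimal Python sketch. It is not Azure Policy syntax and does not reflect any provider's actual implementation. The tenant labels, model names, and function names are all hypothetical, chosen only to show the idea of resolving a different set of permitted AI services depending on tenant type.

```python
from dataclasses import dataclass

# Hypothetical catalogs: model names and tenant labels are illustrative only.
COMMERCIAL_CATALOG = frozenset({"claude", "model-a", "model-b"})
DEFENSE_EXCLUDED = frozenset({"claude"})


@dataclass(frozen=True)
class Tenant:
    name: str
    tenant_type: str  # assumed labels: "commercial" or "defense"


def allowed_models(tenant: Tenant) -> frozenset:
    """Return the set of models a tenant may use under this sketch's rules."""
    if tenant.tenant_type == "commercial":
        return COMMERCIAL_CATALOG
    if tenant.tenant_type == "defense":
        # Defense tenants get the commercial catalog minus excluded models.
        return COMMERCIAL_CATALOG - DEFENSE_EXCLUDED
    # Unknown tenant types get nothing (fail closed).
    return frozenset()


def is_allowed(tenant: Tenant, model: str) -> bool:
    """Check a single model against the tenant's resolved catalog."""
    return model in allowed_models(tenant)
```

In this sketch an unrecognized tenant type resolves to an empty catalog, so a misclassified tenant loses access rather than gaining it. That fail-closed default is a design assumption of the example, not something the providers have described.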

The separation addresses a fundamental tension between the speed of commercial AI deployment and the slower validation cycles of defense procurement. Defense contractors face complex compliance requirements: they must now verify which AI models meet Pentagon approval for government work while maintaining access to broader commercial AI capabilities for non-classified projects.

Pentagon Replacement Timeline

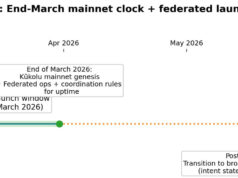

Defense sources indicated it could take three to 12 months to replace Claude’s capabilities on classified networks. Claude is one of only two large generative AI models the Pentagon has deployed on classified systems and the only frontier-class model in that environment. A Defense Department official stated that additional frontier AI models would become available on the GenAI.mil interface before summer 2026.

The complexity stems from training infrastructure dependencies. Anthropic trains Claude primarily on AWS Trainium chips, creating technical lock-in that would require months to replicate on alternative platforms. This mirrors challenges across frontier AI companies where long-term cloud partnerships create migration barriers.

Industry and Legal Implications

Constitutional law scholars noted potential First Amendment implications, as the designation restricts government contractors’ access to specific AI capabilities without clear judicial process. Legal analysts suggest Anthropic may have grounds to challenge whether the designation meets statutory requirements, which are intended to protect military systems from adversarial sabotage rather than resolve commercial contract disputes.

Dean W. Ball, senior fellow at the Foundation for American Innovation, characterized the Pentagon’s action as “attempted corporate murder,” arguing that a sustained designation could force Google, Amazon, and NVIDIA to sever their relationships with Anthropic despite the companies’ legal determinations that commercial work remains permissible.

The situation creates a two-tier AI market in which models face restrictions in federal contracts but remain commercially available. This fragmentation could slow technology transfer from the private to the public sector and complicate allied defense procurement coordination. European and Asian partners who coordinate with the U.S. on defense now face pressure to align their AI procurement policies, potentially creating separate technology stacks along geopolitical lines.

For commercial enterprises, the cloud providers’ unified response means access to Claude continues uninterrupted through the major platforms. Defense contractors must navigate the compliance landscape by segregating AI tools between classified and commercial work streams, which adds operational complexity for multi-sector technology companies.
