Rather than treating upscaling as an optional performance feature, Nvidia’s latest DLSS release signals a move toward AI-assisted rendering as a baseline assumption. That shift has implications not just for content creators, but for how applications are optimized, deployed, and scaled across different classes of devices.

What changed most: DLSS 4.5 introduces deeper context awareness and more advanced use of pixel sampling and motion vectors. According to Nvidia, these improvements significantly raise image quality in Performance and Ultra Performance modes, narrowing the gap between AI-reconstructed output and native rendering. The result is an AI model that increasingly influences how applications are built, not just how fast they run.

- Platform: PC systems with Nvidia RTX GPUs

- Announcement: CES 2026

- Technology Type: AI-based real-time image reconstruction

- Developer: Nvidia

Nvidia revealed DLSS 4.5 during its GeForce On presentation at CES. Bryan Catanzaro, vice president of applied deep learning research at Nvidia, described the updated model as having “greater context awareness of every scene” and a more intelligent approach to combining motion vectors with pixel sampling. These refinements, he said, substantially improve output quality in the most aggressive upscaling modes.

At a technical level, AI upscaling works by rendering content at a lower internal resolution and using a trained neural network to reconstruct missing detail. The advantage is not limited to higher frame rates. By reducing raw rendering workloads, AI reconstruction makes advanced techniques like real-time ray tracing and path tracing feasible on hardware that would otherwise struggle with native rendering.
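To make the workload reduction concrete, here is a minimal Python sketch of the arithmetic behind rendering at a lower internal resolution. The per-axis scale factors are commonly cited defaults for DLSS quality modes and are an assumption here, not figures from Nvidia's announcement; actual values can vary by title and DLSS version. The function names are hypothetical and are not part of any Nvidia API.

```python
# Assumed per-axis render scales for each mode (commonly cited defaults,
# not taken from the DLSS 4.5 announcement).
MODE_SCALE = {
    "Quality": 2 / 3,
    "Balanced": 0.58,
    "Performance": 0.5,
    "Ultra Performance": 1 / 3,
}


def internal_resolution(out_w: int, out_h: int, mode: str) -> tuple[int, int]:
    """Return the (width, height) the game renders before reconstruction."""
    scale = MODE_SCALE[mode]
    return round(out_w * scale), round(out_h * scale)


def shaded_pixel_fraction(out_w: int, out_h: int, mode: str) -> float:
    """Fraction of output pixels that are actually rendered natively."""
    w, h = internal_resolution(out_w, out_h, mode)
    return (w * h) / (out_w * out_h)


if __name__ == "__main__":
    # Print the internal resolution and rendered-pixel share for a 4K output.
    for mode in MODE_SCALE:
        w, h = internal_resolution(3840, 2160, mode)
        share = shaded_pixel_fraction(3840, 2160, mode)
        print(f"{mode}: {w}x{h} ({share:.1%} of output pixels)")
```

Under these assumed factors, Performance mode at a 3840x2160 output renders internally at 1920x1080, or one quarter of the output pixels, and the neural network reconstructs the rest. That freed-up budget is what makes expensive techniques such as ray tracing more practical.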

Nvidia has repeatedly emphasized that DLSS development prioritizes visual integrity, not just speed. Henry Lin, director of product management at Nvidia, noted that internal testing constantly benchmarks AI output against native rendering to ensure performance gains do not come at the cost of image quality.

As AI upscaling becomes more capable, it is also reshaping optimization strategies. Some developers and engineers have raised concerns that reliance on AI reconstruction could reduce incentives to optimize software for lower-end hardware. Wolfgang Wozniak has argued that over-dependence on upscaling risks turning DLSS into a shortcut rather than a complement to efficient design, particularly for systems that lack access to Nvidia’s hardware ecosystem.

That tension may ease as AI-capable GPUs become more widespread. With upcoming platforms such as the next-generation Nintendo Switch expected to integrate Nvidia silicon, hardware-level support for AI reconstruction is likely to extend beyond traditional high-end PCs. Analyst and AI researcher Tommy Thompson has pointed out that titles demonstrated on Nvidia-powered handheld hardware already show high frame rates and strong visual fidelity when DLSS is applied.

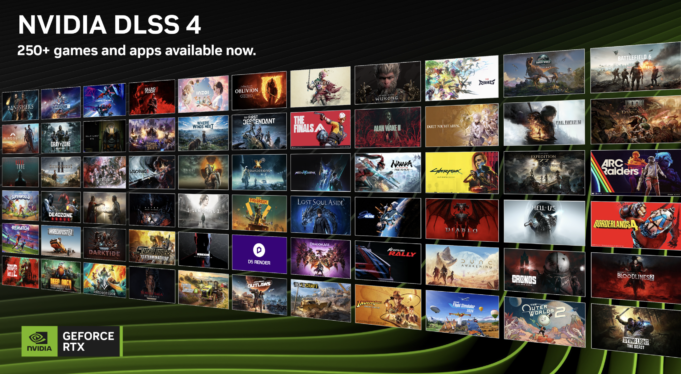

While alternatives like AMD’s FidelityFX Super Resolution and Intel’s Xe Super Sampling continue to evolve, Nvidia’s DLSS remains the most mature example of real-time, neural-network-driven image reconstruction deployed at scale. DLSS 4.5 reinforces Nvidia’s strategy of embedding AI directly into the graphics pipeline, blurring the line between rendering, optimization, and inference.

From a broader technology perspective, DLSS 4.5 highlights how AI is moving from an assistive role into foundational system design. What began as a performance workaround is now influencing how developers plan workloads, how hardware vendors define capabilities, and how future software stacks balance raw compute with intelligent reconstruction. AI upscaling is no longer a niche feature; it is becoming part of the default architecture for real-time graphics.
