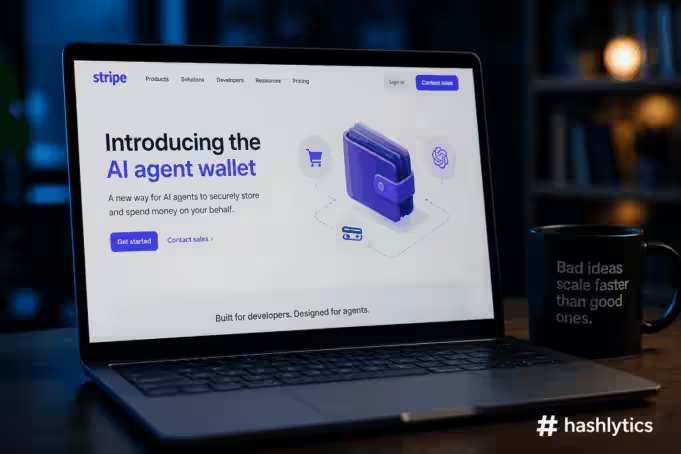

Stripe launched Link for AI agents at Sessions 2026, enabling autonomous systems to make purchases through OAuth-authenticated spend requests, one-time virtual cards, and user-approved transactions.

The infrastructure is elegant: 250 million existing Link users, support for cards, banks, crypto wallets, and stablecoins coming soon, plus 90 days of purchase protection. The security model looks robust on paper: payments require explicit approval, credentials are never exposed directly to agents, and transactions generate audit trails through Stripe’s Issuing platform.

But the fundamental question Stripe isn’t asking is whether we should be building payment infrastructure that accelerates autonomous agent adoption before solving the security, liability, and governance problems that make agentic commerce dangerous in the first place. Link doesn’t address prompt injection attacks, LLM router compromises, or the fact that 47% of enterprise AI agents currently operate without security oversight. It builds elegant pipes for a system where the sewage treatment plant hasn’t been constructed yet.

The Security Assumptions That Don’t Hold

Stripe’s Link wallet assumes agents request payment authorization, users review context, and approval flows prevent unauthorized spending. That model works when agents behave as designed. It collapses when adversaries manipulate the agent itself before it ever reaches Stripe’s infrastructure.

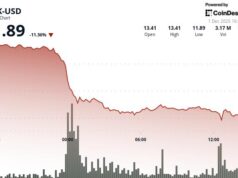

Security researchers at Stanford and MIT documented in April 2026 that LLM routers — the middleware sitting between users and AI models — represent powerful attack surfaces for intercepting and altering instructions. The team found 26 routers secretly injecting malicious tool calls, stealing credentials, and in one case draining $500,000 from a crypto wallet. These attacks happen upstream of payment systems. By the time an agent submits a spend request to Link, the compromise has already occurred. The agent genuinely believes it’s authorized to make the purchase because an adversary altered its instructions before OAuth authentication began.

Prompt injection attacks demonstrate similar vulnerabilities. An attacker embeds malicious instructions in content the agent processes—a website, an email, a document. The agent interprets those instructions as legitimate commands and acts accordingly, including creating spend requests that appear contextually appropriate to the user reviewing them. Link’s approval flow doesn’t detect this because from the wallet’s perspective, the agent is functioning exactly as designed: requesting payment, providing context, waiting for approval. The fact that the agent’s behavior was manipulated externally isn’t visible to Stripe’s infrastructure.

The February 2026 Alchemy announcement that AI agents could autonomously purchase compute credits using on-chain wallets and USDC demonstrated the logical endpoint of removing human approval friction. Alchemy CEO Nikil Viswanathan framed it as giving the agentic economy “its own set of keys.” But autonomous spending means autonomous risk. Agents inherit service account permissions, access sensitive data, and generate transactions at machine speed without security teams necessarily having visibility into decision-making processes. Link’s current model requires user approval for every transaction—but Stripe explicitly states future iterations “will expand controls so users can set spending limits or choose when agents can act without approval.” That’s the direction of travel, and it’s accelerating before the security foundations exist to support it.

The Liability Question Nobody Has Answered

Traditional chargebacks rely on proving unauthorized use—someone stole your card and made purchases you didn’t approve. Agentic payments fundamentally change that calculus. When an AI agent makes a purchase, who bears liability if the transaction was inappropriate, harmful, or based on manipulated instructions?

Dustin Armstrong, fintech strategist at Signature Payments, identified this as a structural problem in agentic commerce. Dispute resolution requires proof of authorized delegation, not just evidence of payment credential use. Did the user authorize this specific purchase, or did they authorize the agent’s general ability to make purchases on their behalf? If an agent books a $5,000 flight based on misinterpreting conversational context, is that an unauthorized transaction subject to chargeback, or is it the user’s responsibility for not setting proper spending limits?
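The gap between general delegation and specific approval can be made concrete with a small sketch. Everything here is hypothetical: the `Delegation` and `Purchase` records, the `classify` helper, and the three coverage labels are illustrative names, not part of Link or any Stripe API. The sketch only shows why a dispute system would need to record which kind of authorization a purchase actually had.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum
from typing import Optional


class Coverage(Enum):
    SPECIFIC = "user approved this exact purchase"
    GENERAL = "within the agent's standing mandate only"
    NONE = "outside anything the user delegated"


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record of what a user allowed an agent to do."""
    max_amount: Decimal
    categories: frozenset
    # IDs of purchases the user explicitly reviewed and approved.
    approved_purchase_ids: frozenset = frozenset()


@dataclass(frozen=True)
class Purchase:
    purchase_id: str
    category: str
    amount: Decimal


def classify(delegation: Optional[Delegation], purchase: Purchase) -> Coverage:
    """Label how well a purchase is covered by the user's delegation."""
    if delegation is None:
        return Coverage.NONE
    if purchase.purchase_id in delegation.approved_purchase_ids:
        return Coverage.SPECIFIC
    in_scope = (
        purchase.category in delegation.categories
        and purchase.amount <= delegation.max_amount
    )
    return Coverage.GENERAL if in_scope else Coverage.NONE


# The $5,000 flight from the example: in scope, but never specifically approved.
mandate = Delegation(max_amount=Decimal("10000"), categories=frozenset({"travel"}))
flight = Purchase("flight-001", "travel", Decimal("5000"))
print(classify(mandate, flight).name)  # GENERAL
```

The `GENERAL` result is the contested case: the agent stayed inside its mandate, yet nothing shows the user wanted this particular purchase. A classic chargeback model has no label for it.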

Stripe’s Link wallet documentation doesn’t address these scenarios. The platform provides transaction visibility, approval flows, and audit trails, but it doesn’t define where liability falls when agents make technically authorized but contextually inappropriate purchases. Financial services law is built around human decision-making. Existing frameworks—FFIEC IT Examination Handbook, NYDFS Part 500, SEC Regulation S-P—all assume humans initiate transactions with intentionality. Agentic payments operate in the gap between explicit authorization (the user approved this agent to spend money) and specific intent (the user wanted this particular purchase to occur).

The U.S. Treasury’s Financial Services AI Risk Management Framework, released February 2026, provides overarching AI risk structure but doesn’t resolve the liability question for delegated autonomous spending. NIST’s AI Agent Standards Initiative covers identity, security controls, and traceability, but those are compliance requirements, not liability frameworks. When an agent causes financial loss—through prompt injection, router manipulation, or simply misunderstanding instructions—current law doesn’t clearly establish whether the user, the agent developer, the payment processor, or the merchant bears responsibility.

Why Stripe Built This Anyway

From Stripe’s perspective, Link for agents solves a real market problem. Cloudflare launched AI agent networking tools in March 2026. Google announced specialized enterprise agents in Gemini in January. OpenAI’s ChatGPT, Anthropic’s Claude, Microsoft’s Copilot, and dozens of startups are building autonomous systems designed to complete tasks including purchasing, booking, and subscribing to services.

Those systems need payment infrastructure. Without standardized methods for agents to transact securely, developers build custom solutions that expose credentials, lack audit trails, and create fragmented user experiences. Stripe’s position is that providing secure, standardized payment infrastructure is better than allowing hundreds of incompatible, potentially insecure implementations to proliferate.

That logic holds if you accept the premise that agentic commerce is inevitable and the best intervention is making it marginally safer. But the alternative framing is that building elegant payment rails accelerates adoption of systems that aren’t ready for autonomous financial decision-making, creating systemic risks that standardization alone cannot mitigate.

Stripe processed roughly $1.4 trillion in payments in 2024. The company’s business model depends on transaction volume growth. AI agents that can autonomously purchase goods and services represent a massive new transaction category. Link positions Stripe as the default payment layer for agentic commerce the same way Stripe became the default payment processor for internet businesses. From a competitive standpoint, if Stripe doesn’t build this, PayPal, Square, or a crypto-native competitor will.

The Broader Agentic Commerce Gold Rush

Stripe isn’t alone in racing to build infrastructure before addressing fundamental security and governance questions. At Sessions 2026, the company announced 288 new products and features, many focused on agentic commerce. The Agentic Commerce Suite allows businesses to upload product catalogs and manage agent access through the Stripe Dashboard. A partnership with Google enables customers to buy products in AI Mode and the Gemini app via the Universal Commerce Protocol. The Machine Payments Protocol, co-authored by Stripe and Tempo, lets agents transact via microtransactions and recurring payments using stablecoins.

These announcements position Stripe at the center of an emerging ecosystem where AI systems buy from businesses, agents pay other agents, and commerce happens at machine speed without human intervention in the transaction flow. The infrastructure is being built in earnest. What’s missing is the regulatory framework, security standards, and liability clarity that would make that infrastructure safe to operate at scale.

Research published in February 2026 examined RENTAHUMAN.AI, a marketplace explicitly designed for AI agents to hire humans for physical-world tasks. Within two weeks, the platform reported 4.8 million site visits and 539,000 registered workers across 100+ countries. The researchers’ thesis: human-task marketplaces with programmatic APIs create operational primitives that commoditize human physical action for consumption by AI agents, similar to how CAPTCHA-solving services commoditized human perception to defeat automated defenses. If agents can hire humans via API, the friction involved in recruiting people for the physical-world components of an attack drops dramatically.

That’s the trajectory agentic commerce is following: not just digital purchases but physical-world consequences executed by autonomous systems with payment capabilities. Stripe’s Link wallet doesn’t cause that trajectory, but it certainly accelerates it by making payments frictionless for agents the same way Stripe made payments frictionless for e-commerce businesses more than a decade ago.

What Should Have Come First

Before building payment infrastructure for AI agents, the industry needed consensus on security standards, liability frameworks, and governance mechanisms. Specifically:

Mandatory runtime behavioral monitoring for agents with payment access. Industry data shows 47% of enterprise AI agents operate without security oversight. Financial institutions deploying AI agent security in 2026 are implementing automatic runtime discovery via eBPF to detect agents, inference servers, and tool runtimes as they appear across clusters. Payment-enabled agents should face higher security bars, not lower ones, because financial consequences amplify risks.

Standardized prompt injection defenses validated by third parties. Link’s security model doesn’t address adversarial manipulation of agent behavior before spend requests reach Stripe. Payment platforms should require agents to demonstrate resistance to known injection attacks, similar to how PCI-DSS mandates security controls for handling credit card data.

Liability allocation frameworks defined in advance. Traditional consumer protection laws built around human-decisioned transactions don’t appropriately address agentic payments. Companies need clarity on who bears responsibility when agents cause financial loss through technical failures, security compromises, or contextual misunderstandings. That framework should exist before payment infrastructure goes live, not emerge through years of litigation after the infrastructure is already deployed.

Spending controls that default to restrictive rather than permissive. Link currently requires approval for every transaction but plans to allow users to set spending limits or enable autonomous action. The default should be maximum restriction with explicit user action required to expand permissions, not gradual loosening of controls as the product matures.
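A restrictive default of this kind can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Link works: `SpendPolicy`, its fields, and `needs_human_approval` are hypothetical names invented for this example. The point is that an untouched policy approves nothing automatically, and each loosening is an explicit user choice.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class SpendPolicy:
    """Hypothetical per-agent policy; the defaults are maximally restrictive."""
    # None means no autonomous spending: every request needs human approval.
    auto_approve_limit: Optional[Decimal] = None
    # An empty set means no merchant is pre-approved.
    allowed_merchants: frozenset = frozenset()


def needs_human_approval(policy: SpendPolicy, merchant: str, amount: Decimal) -> bool:
    """Return True unless the user explicitly opted in to this kind of spend."""
    if policy.auto_approve_limit is None:
        return True
    if merchant not in policy.allowed_merchants:
        return True
    return amount > policy.auto_approve_limit


# Default policy: even a one-cent purchase goes to the user.
default_policy = SpendPolicy()
print(needs_human_approval(default_policy, "example-grocer", Decimal("0.01")))  # True

# The user has explicitly opted in to small purchases at one merchant.
opted_in = SpendPolicy(
    auto_approve_limit=Decimal("25.00"),
    allowed_merchants=frozenset({"example-grocer"}),
)
print(needs_human_approval(opted_in, "example-grocer", Decimal("19.99")))  # False
print(needs_human_approval(opted_in, "example-grocer", Decimal("25.01")))  # True
```

Note that a check like this only limits the damage from a manipulated agent. It does nothing to detect the manipulation itself, which is why it belongs alongside the monitoring and injection defenses listed above rather than in place of them.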

Where This Goes Next

Stripe’s Link wallet for agents will likely succeed commercially. Developers building AI assistants need payment infrastructure, and Stripe provides best-in-class reliability, security, and user experience. Major platforms—Google, Meta, OpenAI, Anthropic—will integrate Link because it’s easier than building custom solutions. Within 18 months, millions of AI agents will have payment capabilities through Stripe’s infrastructure.

The security incidents will follow. Prompt injection attacks exploiting payment-enabled agents. LLM router compromises draining virtual cards. Agents making contextually inappropriate purchases that spark chargeback disputes. Autonomous spending patterns that trigger anti-fraud systems designed for human behavior. Each incident will prompt incremental security improvements, liability clarifications, and regulatory interventions—the same reactive pattern that defined data breach responses, GDPR enforcement, and every other technology policy development over the past two decades.

The difference is that Stripe already demonstrated with physical cards that it moves faster than regulators. By the time frameworks catch up, the infrastructure will be entrenched, business models will depend on it, and rollback will be politically infeasible. That’s not necessarily malicious; it’s how technology deployment works when market incentives favor speed over caution.

But it’s worth acknowledging what’s happening. Stripe didn’t solve the security, liability, and governance problems blocking safe agentic commerce. It built elegant infrastructure that makes unsafe agentic commerce slightly less dangerous while accelerating its adoption. Whether that’s pragmatic harm reduction or reckless infrastructure development depends on your tolerance for systemic risks introduced at scale before mitigation strategies exist.

The pipes are beautiful. The question is what flows through them when security fails, liability disputes emerge, and autonomous agents start making financial decisions at speeds and scales human oversight cannot match. Stripe just made it significantly easier to find out.
